Building a perceptron on paper

If you are an AI enthusiast and an engineer at heart, chances are you want to know what’s inside a neural network.

Everybody learns differently. My favourite way is to learn by doing: to get a real, physical feeling for what it means to teach a neural network, what weights are, and why people say neural networks can be hard to interpret.

One more thing: we’re not going to use a computer for this one. We will use only pen and paper to train our own perceptron, one “neuron” that will learn to say “yes” and “no” at the right time.

You will not need any special education for this one - if you can multiply and add numbers, you can do it!

What are we going to do? #

A perceptron is the simplest linear binary classifier. In simple terms, it’s a special equation that can tell us “yes” or “no”, and you can teach it yourself!

“Linear” means for us that our perceptron is not going to handle every “yes/no” problem, but only those where a straight line can separate the “yes” inputs from the “no” inputs. But that’s okay for our exercise.

Let’s get to it! #

In general, the formula for a perceptron is going to be:

\(y = \operatorname{sign}\left(\sum_{i=1}^{N} w_i x_i + b\right)\)

This looks complex already, so we’ll be building a version of the perceptron that uses just two inputs, so the formula becomes:

\(y = \operatorname{sign}\left(w_1 x_1 + w_2 x_2 + w_0\right)\)

In other words, we will have to do two multiplications and two additions - sounds doable!

(small change - usually, the bias parameter is called b, but I’ve renamed it to w0 for consistency)

Why the sign function? #

You’ve noticed that I’m using a function called sign. Here it simply means: if the result is more than zero, produce one, otherwise produce zero. (Strictly speaking, that is a step function, because the textbook sign function returns -1 for negative numbers. I’ll keep calling it sign.)

We need it because our perceptron only says “yes” or “no”, which we’ll code as 1 and 0.

A function that makes the neural network output only the options we want is called an activation function. In our case, the activation function is sign.
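If you want to double-check your paper arithmetic on a computer later, the formula can be sketched in a few lines of Python. This is only a minimal sketch: the function names `sign` and `predict` are my own choice, and the weights in the example are arbitrary numbers picked for illustration.

```python
def sign(z):
    """The article's "sign": 1 if z is above zero, otherwise 0."""
    return 1 if z > 0 else 0


def predict(w1, w2, w0, x1, x2):
    """Two-input perceptron: y = sign(w1*x1 + w2*x2 + w0)."""
    return sign(w1 * x1 + w2 * x2 + w0)


# Arbitrary example weights (an assumption, not trained values).
print(sign(3), sign(0), sign(-2))  # prints 1 0 0
print(predict(1, 1, -1, 1, 1))     # sign(1 + 1 - 1) = sign(1), prints 1
```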

A challenge for our perceptron #

To teach our perceptron, we’ll need to give it a decent task. In our case, the perceptron will learn to imitate the MYSTERY BOX.

Here’s how the box works:

The box is a mystery to our perceptron, but not to you.

It contains the logical “AND” operator. Here’s how it works:

| If you put these X1 and X2 numbers in the box | You’ll get this Y result |
|---|---|
| X1 = 0, X2 = 0 | 0 |
| X1 = 0, X2 = 1 | 0 |
| X1 = 1, X2 = 0 | 0 |
| X1 = 1, X2 = 1 | 1 |

In other words, both inputs have to be 1 for the box to produce 1, otherwise it always makes 0. Simple enough.
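For readers who like to see it as code, the box could be written down like this. It is just a sketch: `mystery_box` and `INPUTS` are names I made up for illustration.

```python
def mystery_box(x1, x2):
    """Logical AND: produce 1 only when both inputs are 1."""
    return 1 if x1 == 1 and x2 == 1 else 0


# All four possible input pairs, in the same order as the table.
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]

for x1, x2 in INPUTS:
    print(f"X1 = {x1}, X2 = {x2} -> Y = {mystery_box(x1, x2)}")
```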

Let’s see how long it will take for our brave little perceptron to figure it out (spoiler alert - the weights settle in epoch five, and a sixth epoch confirms it!)

Untaught perceptron #

In our perceptron’s formula, you’ve noticed three numbers:

\(w_0, w_1, w_2\)

They are called weights (this is also what they put in “weight files” that you download from the internet when you want to run an AI model locally).

When the perceptron starts fresh, all these weights are equal to zero. The perceptron is pretty meaningless now, but it’s ready to learn!

“Learning” means that these weights will become other numbers. Which numbers? Depends on what our perceptron has learned!

How to teach it #

Teaching a perceptron is two steps:

- Let it calculate the answer. We’ll call it Yp, or Y predicted.
- If it’s wrong, show it the right answer. We’ll call it Yt, or the true value of Y.

Let’s write some more formulae, it’s going to be fun soon, I promise.

\(error = y_t - y_p\)

\(w_1 \to w_1 + error \cdotp x_1\)

\(w_2 \to w_2 + error \cdotp x_2\)

\(w_0 \to w_0 + error\)

Let’s go #

Grab a piece of paper and a pen. I know, you think - “let me just read it, I’ll get it, I’m not stupid”.

You are not, but trust me, you’ll have much more fun with it if you do it yourself.

Anyway, let’s get started.

Epoch 1 #

Ah yes, forgot to tell you. An “epoch” is one cycle of showing the perceptron all our learning examples.

Let’s show our perceptron the numbers.

x1 = 0, x2 = 0. The true answer, Yt is 0.

Come on, perceptron, predict something!

The formula is:

\(y = \operatorname{sign}\left(w_1 x_1 + w_2 x_2 + w_0\right)\)

All weights are 0, so our formula becomes:

\(y = \operatorname{sign}\left(0 \cdotp 0 + 0 \cdotp 0 + 0\right) = \operatorname{sign}(0) = 0\)

Wow, prediction correct, no need to change anything! If this goes like this until the end, it’ll be my easiest blog post ever!

Let’s try more:

(0, 0) -> 0 (correct)

(0, 1) -> 0 (correct)

(1, 0) -> 0 (correct)

Now let’s do (1, 1):

\(y = \operatorname{sign}\left(0 \cdotp 1 + 0 \cdotp 1 + 0\right) = \operatorname{sign}(0) = 0\)

Whoops. That’s not correct, the right answer is 1.

It’s teaching time.

Let’s repeat the formulae:

\(error = y_t - y_p, \quad i.e. \enspace error = 1 - 0 = 1\)

\(w_1 \to w_1 + error \cdotp x_1, \quad i.e. \enspace w_1 = 0 + 1 \cdotp 1 = 1\)

\(w_2 \to w_2 + error \cdotp x_2, \quad i.e. \enspace w_2 = 0 + 1 \cdotp 1 = 1\)

\(w_0 \to w_0 + error, \quad i.e. \enspace w_0 = 0 + 1 = 1\)

At the end of Epoch 1, the perceptron has learned something! Is it going to be enough to now guess the MYSTERY BOX right? (spoiler alert: NO)
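The single teaching step we just did by hand can also be sketched in Python, in case you want to check your numbers afterwards. The name `train_step` and the idea of passing the weights around as a tuple are my own assumptions, not part of any library.

```python
def sign(z):
    """The article's "sign": 1 if z is above zero, otherwise 0."""
    return 1 if z > 0 else 0


def train_step(weights, x1, x2, y_true):
    """Predict once, then nudge each weight by the error."""
    w1, w2, w0 = weights
    y_pred = sign(w1 * x1 + w2 * x2 + w0)
    error = y_true - y_pred
    new_weights = (w1 + error * x1, w2 + error * x2, w0 + error)
    return new_weights, y_pred, error


# The (1, 1) example from Epoch 1, starting from all-zero weights.
new_weights, y_pred, error = train_step((0, 0, 0), 1, 1, 1)
print(new_weights, y_pred, error)  # prints (1, 1, 1) 0 1
```

When the prediction is already right, the error is 0 and all three weights stay where they were.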

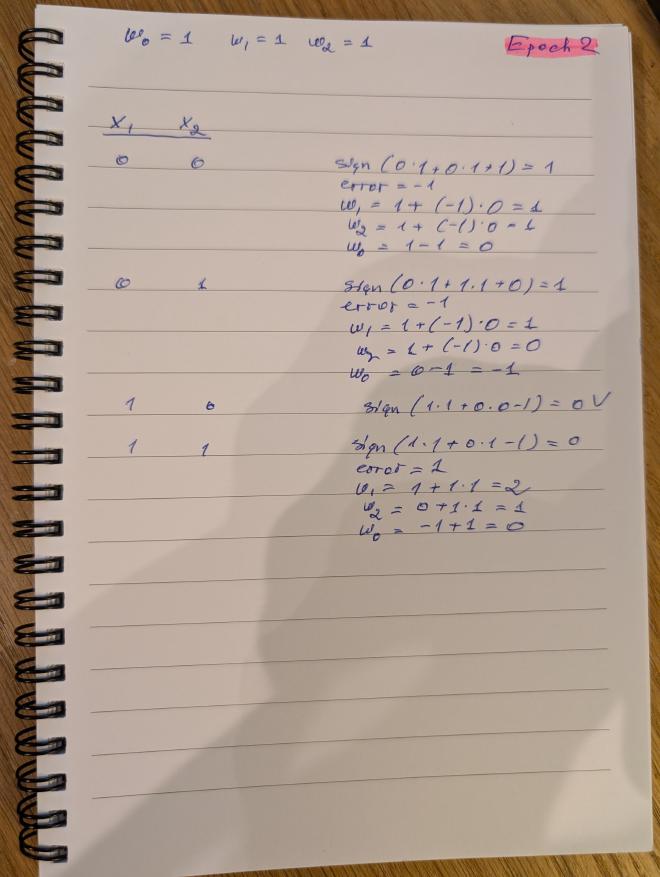

Epoch 2 #

I’ll now use a shorter notation to show how our perceptron learns. Remember, we have six epochs to get through in total, and I’m a lazy person.

Repeat the steps on paper, and use the table to verify if you are still following.

| Stage | w1 | w2 | w0 |
|---|---|---|---|
| Before Epoch start | 1 | 1 | 1 |

| X1 | X2 | Yp | Yt | error | New w1 | New w2 | New w0 |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 1 | 0 | -1 | 1 | 1 | 0 |
| 0 | 1 | 1 | 0 | -1 | 1 | 0 | -1 |
| 1 | 0 | 0 | 0 | ✅ | 1 | 0 | -1 |
| 1 | 1 | 0 | 1 | 1 | 2 | 1 | 0 |

This training epoch had a lot of fluctuation: each mistake immediately changed the perceptron’s weights.

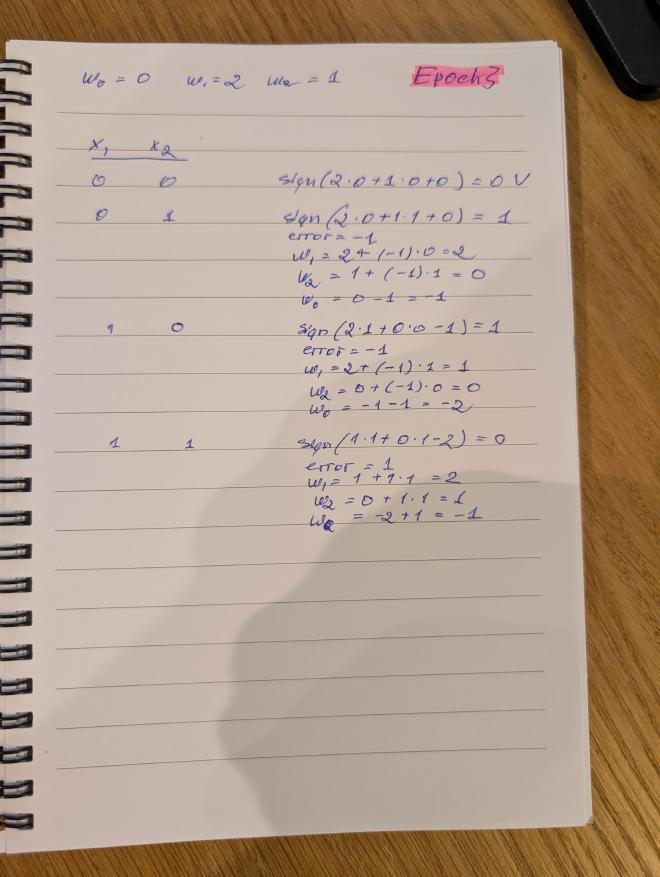
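If your paper table disagrees with mine, a short script can act as a referee. This is a rough sketch, assuming the examples are always shown in the order (0, 0), (0, 1), (1, 0), (1, 1). `run_epoch` and `EXAMPLES` are names I made up, and an error of 0 stands for the ✅ in the table.

```python
# The four training examples, in table order: (x1, x2, y_true).
EXAMPLES = [(0, 0, 0), (0, 1, 0), (1, 0, 0), (1, 1, 1)]


def sign(z):
    """The article's "sign": 1 if z is above zero, otherwise 0."""
    return 1 if z > 0 else 0


def run_epoch(w1, w2, w0):
    """Show every example once, updating the weights right after each one."""
    rows = []
    for x1, x2, y_true in EXAMPLES:
        y_pred = sign(w1 * x1 + w2 * x2 + w0)
        error = y_true - y_pred
        w1, w2, w0 = w1 + error * x1, w2 + error * x2, w0 + error
        rows.append((x1, x2, y_pred, y_true, error, w1, w2, w0))
    return rows


# Epoch 2 starts from the weights left by Epoch 1: w1 = 1, w2 = 1, w0 = 1.
for row in run_epoch(1, 1, 1):
    print(row)
```

Each printed row has the same columns as the table: X1, X2, Yp, Yt, error, and the three new weights. Feed the last three numbers of the final row back into `run_epoch` to get the next epoch.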

Epoch 3 #

| Stage | w1 | w2 | w0 |
|---|---|---|---|
| Before Epoch start | 2 | 1 | 0 |

| X1 | X2 | Yp | Yt | error | New w1 | New w2 | New w0 |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | ✅ | 2 | 1 | 0 |
| 0 | 1 | 1 | 0 | -1 | 2 | 0 | -1 |
| 1 | 0 | 1 | 0 | -1 | 1 | 0 | -2 |
| 1 | 1 | 0 | 1 | 1 | 2 | 1 | -1 |

This training epoch was as unstable as the one before. Will we ever converge? (spoiler: YES)

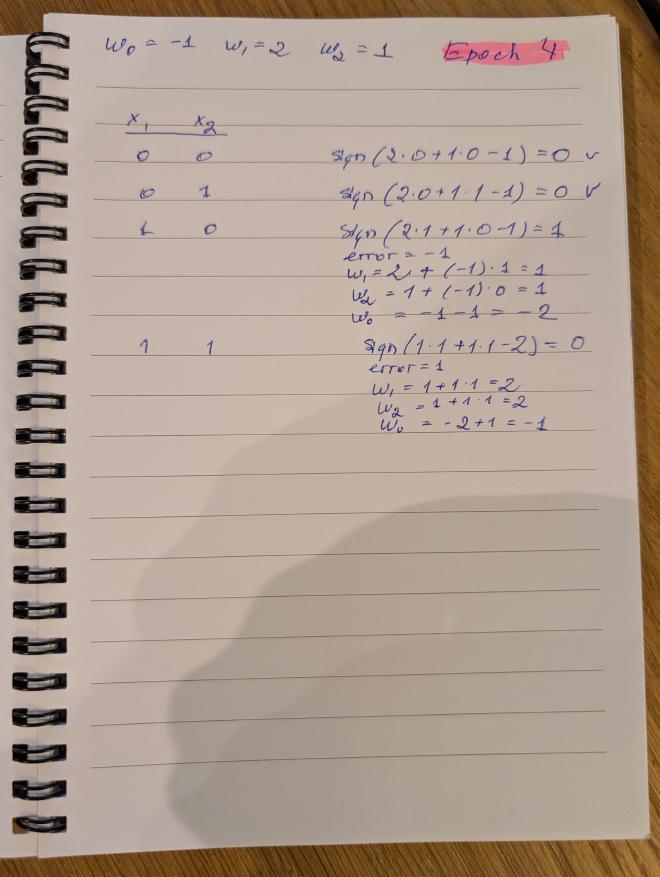

Epoch 4 #

| Stage | w1 | w2 | w0 |
|---|---|---|---|
| Before Epoch start | 2 | 1 | -1 |

| X1 | X2 | Yp | Yt | error | New w1 | New w2 | New w0 |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | ✅ | 2 | 1 | -1 |
| 0 | 1 | 0 | 0 | ✅ | 2 | 1 | -1 |
| 1 | 0 | 1 | 0 | -1 | 1 | 1 | -2 |
| 1 | 1 | 0 | 1 | 1 | 2 | 2 | -1 |

This epoch shows the first signs of stability.

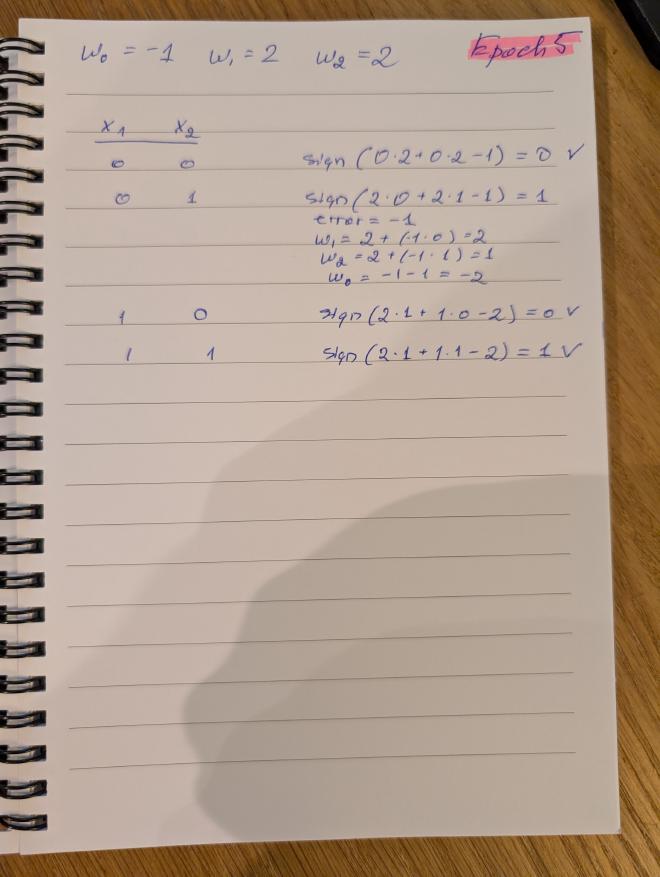

Epoch 5 #

| Stage | w1 | w2 | w0 |
|---|---|---|---|
| Before Epoch start | 2 | 2 | -1 |

| X1 | X2 | Yp | Yt | error | New w1 | New w2 | New w0 |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | ✅ | 2 | 2 | -1 |
| 0 | 1 | 1 | 0 | -1 | 2 | 1 | -2 |
| 1 | 0 | 0 | 0 | ✅ | 2 | 1 | -2 |
| 1 | 1 | 1 | 1 | ✅ | 2 | 1 | -2 |

This epoch definitely made things a lot better!

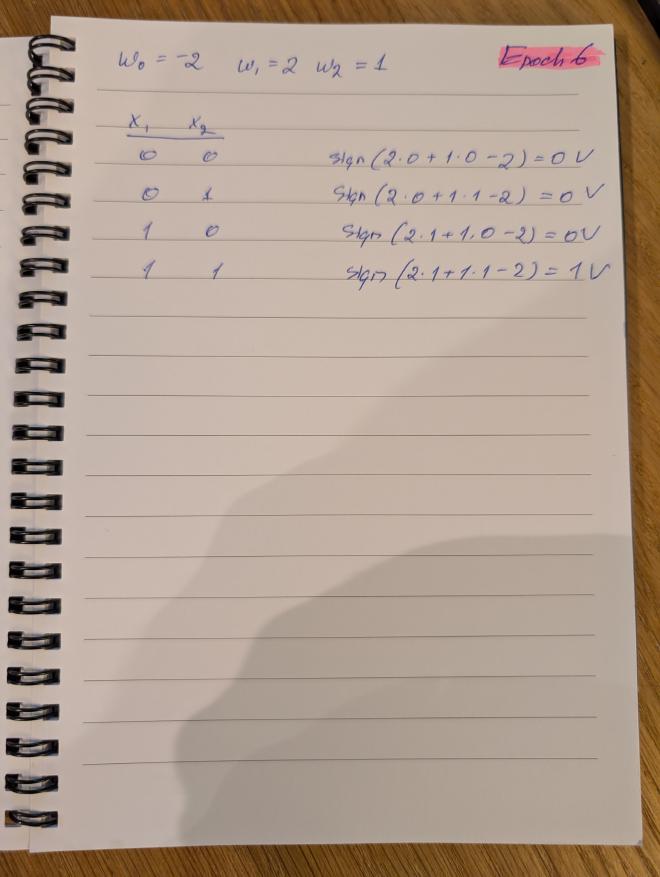

Epoch 6 #

| Stage | w1 | w2 | w0 |
|---|---|---|---|
| Before Epoch start | 2 | 1 | -2 |

| X1 | X2 | Yp | Yt | error | New w1 | New w2 | New w0 |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | ✅ | 2 | 1 | -2 |
| 0 | 1 | 0 | 0 | ✅ | 2 | 1 | -2 |
| 1 | 0 | 0 | 0 | ✅ | 2 | 1 | -2 |
| 1 | 1 | 1 | 1 | ✅ | 2 | 1 | -2 |

In this last epoch we could enjoy a fully trained perceptron that imitates the MYSTERY BOX perfectly.
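Once the paper work is done, the whole training run can be replayed as a loop that keeps going until an epoch passes without a single mistake. Treat this as a sketch under my own assumptions: `train` and the `max_epochs` safety cap are invented for illustration, and the examples are shown in the same fixed order as in the tables.

```python
# The four training examples, in table order: (x1, x2, y_true).
EXAMPLES = [(0, 0, 0), (0, 1, 0), (1, 0, 0), (1, 1, 1)]


def sign(z):
    """The article's "sign": 1 if z is above zero, otherwise 0."""
    return 1 if z > 0 else 0


def train(max_epochs=20):
    """Repeat epochs from all-zero weights until one has no mistakes."""
    w1 = w2 = w0 = 0
    mistakes_per_epoch = []
    for _ in range(max_epochs):
        mistakes = 0
        for x1, x2, y_true in EXAMPLES:
            error = y_true - sign(w1 * x1 + w2 * x2 + w0)
            if error != 0:
                mistakes += 1
                w1 += error * x1
                w2 += error * x2
                w0 += error
        mistakes_per_epoch.append(mistakes)
        if mistakes == 0:
            break  # a clean epoch: nothing left to learn
    return (w1, w2, w0), mistakes_per_epoch


weights, mistakes = train()
print(weights)   # prints (2, 1, -2)
print(mistakes)  # prints [1, 3, 3, 2, 1, 0]
```

The list of mistakes matches the tables: the last correction happens in Epoch 5, and Epoch 6 is the clean pass that confirms it.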

Some observations at the end #

It didn’t require a computer #

For many people, machine learning is more about computers than mathematics (it’s in the name: “machine”, duh!). However, I find it extremely useful to demystify the process by going back to pen and paper.

Occam’s razor cuts away all the unnecessary parts of the process - consciousness, reasoning, and other human-like characteristics were not necessary for our little formula to learn to simulate the logical “AND” operator.

It’s hard to explain #

The numbers we’ve ended up with (2, 1, and -2) don’t look like they have anything to do with logical “AND”. The formula is rather small, so we can still inspect it and figure out what it does.

Now imagine not three, but billions of weights, and you will see why it is extremely hard to correlate them to real-life concepts that a neural network is dealing with.
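With only three weights, the inspection is still easy. Here is a small sketch that prints the raw sum for every input (`weighted_sum` is a name I made up):

```python
# The trained weights from the last epoch.
W1, W2, W0 = 2, 1, -2


def weighted_sum(x1, x2):
    """The raw value inside sign(...), before the yes/no decision."""
    return W1 * x1 + W2 * x2 + W0


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    total = weighted_sum(x1, x2)
    answer = 1 if total > 0 else 0
    print(f"({x1}, {x2}): sum = {total}, answer = {answer}")
```

The sums come out as -2, -1, 0 and 1, so only (1, 1) gets above zero. That is “AND”, even though none of the weights says so on its own.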

It scales - with layers #

Many such “perceptrons”, connected together, make a real, functional neural network that is capable of processing much more complex inputs and producing much more complex results.

A neural network is not just a bag of perceptrons. Instead, these mathematical units are arranged in layers, where each layer transforms the result a little, and passes it on to the next layer.

By “layer” I simply mean that you calculate a set of formulae first, then take their result, and pass to the next set of formulae, and so on. All of them have their own weights, and train similarly to the process above.
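To make “layers” a bit more concrete, here is a toy two-layer network. It is purely an illustration and not something we trained above: I picked the weights by hand, and the names `neuron` and `tiny_network` are my own. It answers “is exactly one input equal to 1?”, a question that a single perceptron cannot answer because no straight line separates the “yes” inputs from the “no” inputs.

```python
def sign(z):
    """The article's "sign": 1 if z is above zero, otherwise 0."""
    return 1 if z > 0 else 0


def neuron(weights, bias, inputs):
    """One perceptron: weighted sum of the inputs plus a bias, then sign."""
    return sign(sum(w * x for w, x in zip(weights, inputs)) + bias)


def tiny_network(x1, x2):
    """Two layers; all weights are hand-picked for illustration."""
    # Layer 1: two neurons look at the raw inputs.
    h1 = neuron((1, 1), -0.5, (x1, x2))    # 1 if at least one input is 1
    h2 = neuron((-1, -1), 1.5, (x1, x2))   # 1 unless both inputs are 1
    # Layer 2: one neuron looks only at the results of layer 1.
    return neuron((1, 1), -1.5, (h1, h2))  # 1 only if h1 and h2 are both 1


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print((x1, x2), "->", tiny_network(x1, x2))
```

The second layer never sees X1 and X2 directly. It only works with what the first layer has already computed, and that is the whole idea of stacking layers.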

Of course, the LLMs #

Nowadays, “AI” often means “an LLM”. How do these work? After all, they speak human languages rather than produce numbers.

This part starts simply: text is converted into numbers. Not usually whole words, but smaller pieces that in the LLM world are called tokens. For example, a token can be a word, part of a word, punctuation mark, or common phrase.

Then each token is represented not just by one number, but by a long list of numbers. This list tries to capture how that token behaves in language. From that point on, the model is doing mathematics on numbers, just like our perceptron - only on a much larger scale.
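A toy version of these two steps might look like the sketch below. Everything in it is hypothetical: the tiny vocabulary, the three-number lists, and the simple longest-match splitting are stand-ins I made up. Real LLMs learn their vocabulary and their number lists during training, and use far longer lists.

```python
# Hypothetical toy vocabulary: token text -> token id.
VOCAB = {"per": 0, "cep": 1, "tron": 2, "s": 3, " learn": 4, "!": 5}

# Hypothetical table with one short list of numbers per token id.
EMBEDDINGS = [
    [0.2, -0.1, 0.5],
    [0.0, 0.3, -0.4],
    [0.7, 0.1, 0.1],
    [-0.2, 0.0, 0.9],
    [0.4, -0.6, 0.2],
    [0.1, 0.1, -0.8],
]


def tokenize(text):
    """Split text into token ids, always taking the longest known piece."""
    ids = []
    start = 0
    while start < len(text):
        for end in range(len(text), start, -1):
            piece = text[start:end]
            if piece in VOCAB:
                ids.append(VOCAB[piece])
                start = end
                break
        else:
            raise ValueError(f"no token matches {text[start:]!r}")
    return ids


def embed(ids):
    """Swap every token id for its list of numbers."""
    return [EMBEDDINGS[i] for i in ids]


ids = tokenize("perceptrons learn!")
print(ids)            # prints [0, 1, 2, 3, 4, 5]
print(embed(ids)[0])  # prints [0.2, -0.1, 0.5]
```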

Are things really that simple? #

No, not really.

Where they are that simple is that there’s a strict deterministic mathematical model behind all AI models. You can re-create ChatGPT on paper, you’ll just need a lot of paper, a good supply of pens, and a very long life.

Where it isn’t that simple is the modern models’ structure and the learning process. Today’s exercise could hopefully help you to get a better, more palpable feeling of how the maths works, but the real thing needs more complex calculations.

The end. ■